Tesla’s Invisible Moat: The Most Elegant Physical AI Training Program Ever Built

- Alex Kopel

- May 1

Three structural advantages that make Tesla’s moat deeper than the numbers suggest — and why they’re almost impossible to replicate.

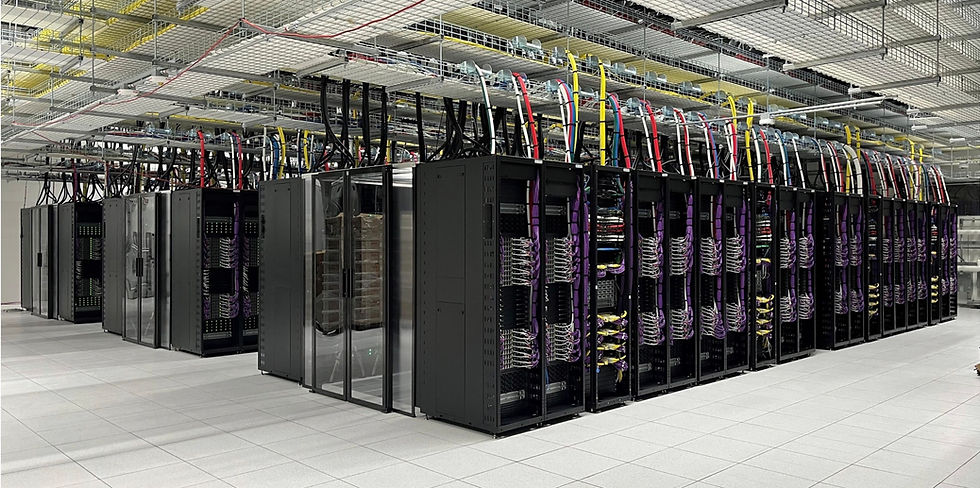

Tesla Cortex 2 — AI Training Cluster. This is where the moat is being built.

We went through Tesla’s Q1 2026 earnings call. A lot of the coverage focused on the HW3 FSD situation — 4 million vehicles sold between 2019 and 2024 with promises of full self-driving capability that, Musk now confirmed, those vehicles simply cannot deliver. The hardware lacks the memory bandwidth. A costly swap involving both the computer and cameras is required. No timeline, no pricing, and the custom retrofit chip doesn’t even exist yet. Class action exposure is real.

That’s a legitimate near-term risk and we don’t dismiss it.

But buried deeper in the transcript was something more revealing. When asked whether V14.3 was the final piece needed for large-scale unsupervised FSD, Musk said essentially yes, and Ashok Elluswamy noted that the Robotaxi fleet has recorded zero incidents to date. But Musk was equally direct about what comes next: it simply wouldn’t make sense to deploy at very large scale when Tesla already knows that major architectural improvements in the pipeline will significantly improve both safety and capability. V15 is not a regulatory hurdle Tesla needs to clear. It is a self-imposed standard.

Read that carefully. Tesla has a version of FSD working well enough to deploy commercially today — already running unsupervised in three cities. But they’re consciously choosing not to go to massive scale yet because a better version is coming.

That’s responsible. It’s also the moment we started thinking harder about who, exactly, is paying for V15 to exist.

The Customers Are the Product

Here is the thesis in its simplest form:

When a customer buys a Tesla and pays $10,000–$15,000 for Full Self-Driving, they are not just buying a software feature. They are, knowingly or not, enrolling themselves as a data generation node in the world’s largest autonomous driving training program. Every mile they drive on FSD — every near-miss, every unusual intersection, every moment the system hesitates and the driver corrects — flows back to Tesla’s servers and into the neural network that becomes the next version of FSD.

The customers bearing the cost of building this moat are the same people generating the data that makes the moat deeper. They are simultaneously Tesla’s revenue source and Tesla’s R&D infrastructure.

This is not an accident. It is the strategy.

Tesla has approximately 4 million FSD-enabled vehicles on the road today. The fleet is collectively driving an estimated 30–40 million miles on FSD every single day. Tesla is approaching 10 billion cumulative miles of FSD training data. For context, Waymo — the category leader in fully driverless commercial operation after 17 years of work — has approximately 200 million fully autonomous miles.

Tesla’s customers have generated roughly 50 times more training data, and they paid Tesla to do it.
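The data comparison is simple arithmetic, and a short sketch makes the scale easy to check. The Python below is a back-of-envelope illustration only. It uses the round figures quoted in this letter: about 10 billion cumulative FSD miles, about 200 million Waymo driverless miles, and 30 to 40 million FSD miles per day. The constant and function names are our own. It checks the arithmetic, not whether supervised FSD miles and fully driverless miles are equivalent as training data.

```python
"""Back-of-envelope check of the fleet-data figures quoted in this letter.

All inputs are the letter's round estimates, not independently verified data.
"""

TESLA_FSD_MILES = 10e9          # "approaching 10 billion" cumulative FSD miles
WAYMO_DRIVERLESS_MILES = 200e6  # "approximately 200 million" fully autonomous miles
DAILY_FSD_MILES = (30e6, 40e6)  # estimated FSD miles driven per day (low, high)


def data_ratio(tesla_miles: float, waymo_miles: float) -> float:
    """How many times larger Tesla's cumulative mileage is than Waymo's."""
    return tesla_miles / waymo_miles


def days_to_accumulate(miles: float, daily_rate: float) -> float:
    """Days of fleet driving needed to log `miles` at `daily_rate` miles/day."""
    return miles / daily_rate


if __name__ == "__main__":
    ratio = data_ratio(TESLA_FSD_MILES, WAYMO_DRIVERLESS_MILES)
    print(f"Cumulative mileage ratio: {ratio:.0f}x")
    for rate in DAILY_FSD_MILES:
        days = days_to_accumulate(WAYMO_DRIVERLESS_MILES, rate)
        print(f"At {rate / 1e6:.0f}M miles/day, the fleet logs Waymo's "
              f"entire driverless total every {days:.1f} days")
```

At the letter's own daily rates, the fleet logs the equivalent of Waymo's entire driverless total roughly every five to seven days.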

We’ve seen this dynamic before. It reminded us of something.

The Meta Parallel

We’ve been investors in Meta since 2018 — it has been our largest holding for several years and has compounded at over 19% annually since our initial purchase. We stayed through the controversies, the regulatory hearings, the metaverse skepticism, and the 2022 collapse when the stock lost more than 75% of its value and some of the most respected investors in the world were selling. We didn’t. The reason we got involved — and stayed involved — was a simple observation: Meta had built a network effect that compounded in their favor every single day.

Every new user who joined Facebook or Instagram made the platform more valuable to every existing user. And crucially, every new user made it harder for a competitor to replicate the network. The moat didn’t just exist — it widened automatically as the business grew. By the time anyone understood the magnitude of what Meta had built, it was effectively too late to displace it.

What Tesla has built is the same dynamic applied to AI training.

Every Tesla sold adds another data-generating node to the network. Every mile driven makes the training dataset richer and more diverse. Every edge case encountered in Topeka or Taipei that the system learns from is an edge case no competitor has seen. You cannot buy, simulate, or shortcut your way to 10 billion miles of real-world autonomous driving data across every climate, road type, and driving culture on earth.

The moat doesn’t just exist. It widens by approximately 30–40 million miles every single day. Meta’s users give their data in exchange for a free service. Tesla’s customers pay $10,000–$15,000 for the privilege of generating it. If Meta’s model is elegant, Tesla’s is something else entirely.

Why the Moat Is Deeper Than the Market May Realize

When Meta ships a change to more than three billion users and something breaks, Zuckerberg sees the data the next morning, a fix ships within days, and the iteration loop closes quickly. The product improves through public testing, and that is not just acceptable; it is the strategy. Move fast. Ship. Learn. Repeat. The progress is visible every quarter in engagement metrics, daily active users, and advertising revenue. The cost of a bad deployment at Meta is categorically different from the cost at Tesla.

Tesla’s failure mode is a human being dying on a public road.

That single difference changes everything about the development cycle — and explains almost entirely why a company with 10 billion miles of training data, its own chips, its own supercomputers, and a functioning unsupervised FSD system already running in three cities has yet to produce the evidence that justifies the market’s ambition for it.

Tesla cannot use its customers as beta testers the way most software companies do. It cannot ship V15 to a million cars, watch what breaks, and patch it next week. Every improvement has to be validated internally (in simulation, across a dedicated QA fleet spread throughout the United States, and stress-tested against every scenario the system might encounter) before it ever touches a paying passenger. The iteration loop that takes Meta days takes Tesla months or longer.

Musk made this explicit on the Q1 call in a moment that deserves more attention than it received. When asked about the key safety metrics gating Robotaxi expansion, he clarified that the primary bottlenecks are not safety issues in the traditional sense at all. They are what he called convenience issues — the car getting “scared” to cross a railroad track, getting stuck at a light that never changes, or circling a block in an infinite loop because construction is blocking a turn. Twelve Tesla Robotaxis in Austin got stuck waiting behind a Waymo that had crashed into a bus. The bus wasn’t moving. The Robotaxis didn’t know what to do.

In any other software product, that bug gets reported by users, triaged by engineers, and fixed in the next deploy. For Tesla, that edge case has to be caught in internal testing before the car is ever in that situation with a paying passenger — because the consequences of public failure are not a one-star review. They are a regulatory halt, a congressional investigation, and potentially years of setback to the entire autonomous vehicle industry.

This creates a profound asymmetry between the speed at which Tesla’s capabilities are actually advancing and the speed at which that advancement is visible to outside observers. The internal progress is continuous. The training data accumulates daily. V14.3 works. V15 is in development with major architectural improvements. Terafab is under construction. AI5 has taped out. But none of it shows up in the revenue line until the safety validation threshold is crossed and deployment happens at scale. So the market sees a company that keeps promising a future that keeps being one version away — when the more accurate description is a company that is genuinely advancing its capabilities rapidly but is structurally prevented from demonstrating that progress publicly until it meets a safety bar that has no equivalent in software.

There is a deeper irony here. The very constraint that makes Tesla’s timeline so frustrating to investors (the inability to iterate publicly, the necessity of getting it right before deploying widely) is also part of what makes the moat so durable once it is established. Any competitor that tries to shortcut safety validation risks the kind of catastrophic public failure that sank Cruise after its October 2023 accident. The bar is so high, and the reputational and regulatory consequences of clearing it imperfectly so severe, that only companies with extraordinary data scale, deep pockets, and genuine patience can stay in the game long enough to cross it.

This is, we think, the central reason the moat is likely underpriced. It is not that investors don’t believe in autonomous vehicles. It is that the infrastructure behind Tesla’s progress — the training data compounding daily, the chip architecture evolving, Terafab being built — is entirely invisible, while the challenges — missed timelines, HW3 obligations, hardware obsolescence cycles — surface publicly through earnings releases, regulatory filings, litigation, and analyst scrutiny.

The moat widens in silence. Musk talks up the vision on every earnings call. But the architecture being assembled underneath that vision is what the market has yet to fully price, in our view.

Why Competitors Cannot Replicate This

The question we always ask about a moat is: what would it take for a well-funded competitor to close the gap?

For Tesla’s data flywheel, the answer is genuinely daunting. To replicate Tesla’s training pipeline a competitor would need to simultaneously sell millions of vehicles, convince millions of customers to pay for an autonomous driving package, deploy that fleet across diverse global geographies, and build the AI infrastructure to process it — all while Tesla’s existing fleet continues to widen the gap daily.

Waymo has 3,000 vehicles. GM’s Cruise collapsed after a serious accident in 2023. Ford and VW shut down Argo AI. Every major automaker that attempted to build this internally has failed, partnered, or retreated.

The most credible competitive threat comes from China — and specifically from Xpeng, which deserves more attention than it typically receives in Western investment analysis. Xpeng has built a vision-based, end-to-end neural network approach architecturally similar to Tesla’s, trained it on Chinese road data at meaningful scale, developed its own proprietary AI chip, and as of early 2026 is producing results that have surprised even skeptical Western analysts. BYD — despite being the world’s largest EV maker — is focused on basic driver assistance rather than true autonomy. Huawei is investing heavily but relies on expensive LiDAR-based hardware and fragmented data collection across partner automakers rather than a unified fleet. The structural constraint for all three remains the same: their data is overwhelmingly Chinese, and the regulatory and cultural barriers to deploying globally mean Tesla’s Western market moat remains largely unthreatened for now. But Xpeng is building something real — and watching how quickly they close the gap on Tesla in China is the most important competitive data point to monitor.

The one theoretical path to leapfrogging Tesla’s data moat would be a breakthrough in synthetic data generation: training AI on simulated rather than real-world miles so effectively that real-world data becomes less important. That is possible in principle, but nobody has shown it working at the level required for unsupervised FSD. And Tesla itself uses simulation heavily alongside real-world data, so it would benefit from that breakthrough too.

The Cost Structure That Changes Everything

There is a second dimension to Tesla’s moat that we think is equally underappreciated: the unit economics of the Robotaxi business, when it scales, will be structurally difficult for Waymo to compete with.

Waymo’s model requires expensive vehicles: its Jaguar I-Pace fleet costs roughly $70,000 per car before the sensor suite is added. On top of that come LiDAR, radar, extensive pre-mapping of every new city, remote operators monitoring the fleet, and fleet management partnerships in each market. Waymo’s current operating cost runs approximately $1.36–$1.43 per mile.

Tesla’s Cybercab is targeting under $30,000 with no LiDAR, no pre-mapping requirement, and full vertical integration across chip design, software, manufacturing, and the service network. Current real-world data already shows Tesla’s Robotaxi operating at roughly $0.81 per mile — with a safety monitor still present. Fully unsupervised, credible estimates put Tesla’s cost somewhere between $0.20 and $0.40 per mile at scale.

At $0.25 per mile, personal car ownership becomes economically irrational for a significant portion of the population. That is not a taxi market or a ride-hailing market. That is a complete restructuring of how urban transportation works globally. Waymo’s cost structure, even with their new Zeekr RT platform, does not credibly get there.
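The per-mile figures above can be turned into a rough side-by-side comparison. This sketch uses only the ranges quoted in the letter. `ANNUAL_MILES` is a hypothetical rider profile chosen purely for illustration. The calculation also treats operating cost as if it were the price a rider pays and ignores any operator margin, so it gives a floor rather than a fare estimate.

```python
"""Rough per-mile cost comparison using the ranges quoted in this letter.

Inputs are the letter's estimates, not audited operating data.
ANNUAL_MILES is a hypothetical assumption chosen only for illustration.
"""

WAYMO_COST = (1.36, 1.43)               # current Waymo operating cost, USD/mile
TESLA_SUPERVISED_COST = 0.81            # Tesla Robotaxi with safety monitor, USD/mile
TESLA_UNSUPERVISED_COST = (0.20, 0.40)  # estimated unsupervised cost at scale
ANNUAL_MILES = 10_000                   # hypothetical: miles one rider travels per year


def cost_advantage(competitor_cost: float, tesla_cost: float) -> float:
    """How many times cheaper per mile Tesla would be than the competitor."""
    return competitor_cost / tesla_cost


def annual_cost(cost_per_mile: float, miles: int = ANNUAL_MILES) -> float:
    """Yearly spend if every mile is bought at `cost_per_mile` (no margin added)."""
    return cost_per_mile * miles


if __name__ == "__main__":
    # Narrowest gap: cheapest Waymo vs most expensive unsupervised Tesla estimate.
    low = cost_advantage(min(WAYMO_COST), max(TESLA_UNSUPERVISED_COST))
    # Widest gap: most expensive Waymo vs cheapest unsupervised Tesla estimate.
    high = cost_advantage(max(WAYMO_COST), min(TESLA_UNSUPERVISED_COST))
    print(f"Unsupervised Tesla vs Waymo: {low:.1f}x to {high:.2f}x cheaper per mile")
    supervised = cost_advantage(min(WAYMO_COST), TESLA_SUPERVISED_COST)
    print(f"Supervised Tesla vs Waymo: {supervised:.1f}x cheaper per mile")
    for label, rate in [("Waymo", min(WAYMO_COST)), ("Tesla at $0.25", 0.25)]:
        print(f"{label}: ${annual_cost(rate):,.0f} per year for {ANNUAL_MILES:,} miles")
```

On the letter's numbers, unsupervised operation would be roughly 3.4 to 7 times cheaper per mile than Waymo today. The gap narrows if either side's cost estimates prove optimistic.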

Terafab: From Silicon to Street

To understand Terafab properly, you have to understand something about how Tesla became Tesla.

Vertical integration was never the original plan. When Musk set out to build electric cars, the intent was to do what every other automaker does — design the vehicle and outsource the components. Buy batteries from suppliers. Source electronics from vendors. Contract manufacturing where possible. That’s the established playbook and it keeps capital requirements manageable.

It didn’t work. Not because the suppliers were unwilling, but because they couldn’t meet Tesla’s standards for quality, pace, and first-principles engineering at the scale Tesla needed. Time and again, Tesla would go to an outside supplier for a critical component — seats, battery management systems, power electronics, body panels — and find that the supplier’s timeline was too slow, their quality inconsistent, or their willingness to iterate on design simply incompatible with how Tesla operated. So Tesla brought it in-house. Not as a grand strategy. As a repeated, pragmatic response to the same problem: nobody outside can do what we need, at the speed we need it, the way we need it done.

The result, after 20 years of those decisions compounding, is the most vertically integrated major manufacturer in the automotive industry. Tesla designs its own chips, writes its own software, operates its own manufacturing lines, runs its own service centers, owns its own charging network, and trains its own AI. Each of those capabilities started as a frustration with an outside vendor and ended as a competitive advantage.

Terafab is that same story playing out one more time — but at a scale that dwarfs everything that came before it.

When a Barclays analyst asked on the Q1 call whether Terafab was really about generating leverage over chip suppliers — essentially getting better pricing by threatening to go in-house — Musk’s answer was characteristically direct: no. This is not a negotiating tactic. It is survival.

Tesla looks at its scaling roadmap for FSD, Cybercab, and Optimus and concludes that the entire global chip industry, as currently constituted, cannot produce enough chips fast enough to support where Tesla needs to go. Not at any price. The industry’s growth in logic chips is insufficient. In memory — the specific bottleneck that determines how capable AI models can become, the same memory bandwidth constraint that made HW3 obsolete — the gap is even more acute. Tesla anticipates hitting a hard supply ceiling within three to four years if it doesn’t manufacture chips itself.

That’s the primary motivation. Not economics. Not leverage. Supply survival.

The memory point deserves particular emphasis because it connects directly to the thread running through this entire earnings call. Why can’t HW3 do unsupervised FSD? Memory bandwidth. Why does AI advancement keep requiring new hardware generations? Memory bandwidth. The entire trajectory of autonomous driving improvement is fundamentally constrained by how fast AI chips can move data in and out of memory. When Musk says the industry isn’t keeping up on memory even more than on logic, he is identifying the precise bottleneck that determines how good FSD can get and how quickly. Controlling your own memory fabrication is therefore not peripheral to the FSD roadmap. It is central to it.

Terafab is a $20–25 billion joint venture between Tesla, SpaceX, and xAI — officially launched in March 2026 — designed to become the largest semiconductor fabrication facility ever built, targeting one terawatt of AI compute annually. At full capacity, that would represent roughly 70% of the current global output of TSMC, the world’s dominant chip foundry.

But scale alone is not the point. The architecture is.

Most chip production on earth separates the stages across multiple sites and companies. You design the chip in one place, create the lithography masks in another, fabricate the wafers at a foundry, package them elsewhere, and test them somewhere else entirely. Each handoff takes weeks. A full iteration cycle — design a chip, manufacture it, test it, find the problems, redesign — takes months. Sometimes longer.

Terafab puts every single one of those stages under one roof. Chip design. Mask creation. Logic fabrication. Memory production. Advanced packaging. Testing. All of it, in one building, on the North Campus of Giga Texas in Austin. As Musk noted on the call, and as independent reporting confirms, this capability does not currently exist anywhere else in the world.

In practice, that could compress the chip iteration cycle from months to weeks or even days. Tesla could take a new chip architecture from design through masks, wafers, and testing, then revise it, without shipping anything between sites or companies. For a company whose competitive advantage depends on advancing AI faster than anyone else, this is not an incremental improvement. It is a different category of capability entirely.

And Musk went further. He mentioned almost in passing that Tesla has ideas for “maybe radically better AI chips” — new physics they want to test, long-shot research that a conventional external fab relationship would make nearly impossible to pursue. If even one of those long shots pays off — better memory bandwidth, lower power consumption, faster inference — that improvement stays proprietary inside Tesla’s stack. It doesn’t get fabbed at TSMC where other customers eventually access similar process improvements. It flows directly and exclusively into FSD, Cybercab, and Optimus. The iteration speed advantage and the potential for proprietary breakthroughs reinforce each other.

Tesla is partnering with Intel on this, using Intel’s 14A process, a 1.4-nanometer-class node that succeeds Intel’s 18A and sits at the leading edge of its manufacturing roadmap. The partnership reduces dependence on TSMC and the geopolitical concentration risk that dependence carries.

Today the moat is: data scale plus cost structure plus vertical integration in vehicles and software. With Terafab, the moat extends to the silicon itself. Tesla would be designing its own chips, fabricating them in its own facility, training its own AI on its own supercomputers, deploying that AI in its own vehicles, operating its own robotaxi network, and iterating on all of it faster than any external dependency would allow.

There is one important caveat worth stating plainly. Musk acknowledged on the earnings call that the intercompany structure — Tesla doing the research fab, SpaceX handling the initial large-scale facility — creates genuine governance complexity. Any intercompany arrangement requires independent director review at both Tesla and SpaceX to ensure each company’s shareholders are being served fairly. Early reporting suggests approximately 80% of Terafab’s projected compute output is directed toward space applications for SpaceX, with only 20% for terrestrial uses like FSD and Optimus. That ratio deserves scrutiny. Tesla shareholders should watch carefully to ensure the capital Tesla is contributing to this joint venture generates returns that flow back to Tesla — not primarily to SpaceX.

That governance question is real and unresolved. But it doesn’t change the fundamental observation: if Terafab executes as described, Tesla’s vertical integration story stops being about cars and software and becomes something far larger — a company that controls its own AI hardware destiny from silicon to street. And unlike most of Tesla’s ambitious announcements, this one has a clear historical precedent. Every time Tesla has been forced by outside limitations to build something itself, it has ended up owning a capability its competitors have to buy. Terafab looks like the largest version of that pattern yet.
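To put the reported 80/20 split in compute terms, the sketch below applies it to the one-terawatt target and to the letter's comparison with TSMC. The inputs are the reported figures quoted above, and the names are our own. It measures only where compute output would go. It says nothing about how Tesla's capital contribution compares, which is the governance question itself.

```python
"""Where Terafab's targeted output would go under the reported split.

Inputs are figures quoted in this letter (targets and early reporting),
not confirmed plans.
"""

TERAFAB_TARGET_TW = 1.0  # targeted AI compute per year, in terawatts
SPACE_SHARE = 0.80       # reported share directed to SpaceX space applications
TERAFAB_VS_TSMC = 0.70   # full Terafab output as a fraction of TSMC's current output


def split_output(total: float, space_share: float) -> tuple[float, float]:
    """Return (space, terrestrial) portions of `total` under `space_share`."""
    space = total * space_share
    return space, total - space


if __name__ == "__main__":
    space_tw, terrestrial_tw = split_output(TERAFAB_TARGET_TW, SPACE_SHARE)
    print(f"Space applications: {space_tw * 1000:.0f} GW")
    print(f"Terrestrial (FSD, Optimus): {terrestrial_tw * 1000:.0f} GW")
    # Terrestrial share of Terafab, scaled by Terafab's size relative to TSMC.
    print(f"Terrestrial slice vs TSMC today: {(1 - SPACE_SHARE) * TERAFAB_VS_TSMC:.0%}")
```

On these figures, the terrestrial portion would be about 200 GW, or roughly 14% of TSMC's current output.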

The Risks — And We Take Them Seriously

Intellectual honesty requires us to present the other side with equal weight, because the risks here are real.

Execution risk is the most significant: Tesla has been promising unsupervised FSD at scale since 2016. It is now 2026 and they are running dozens of cars in three cities. The pattern of promises followed by timeline slippage is undeniable and any investment thesis built on Tesla’s autonomous future must grapple honestly with this track record.

The hardware obsolescence cycle is a structural problem: As our analysis of this earnings call showed, the same dynamic that stranded HW3 owners will likely repeat. AI4.5 is already shipping in new Model Ys. AI4 Plus is announced for 2027. Today’s Cybercab buyers are purchasing hardware that is already one generation behind. The conflict between Tesla’s AI advancement roadmap and its obligations to existing customers does not resolve neatly.

The customer trust deficit is real and compounding: Approximately 4 million HW3 owners were told definitively that their hardware would support full self-driving. It will not. Class action litigation is likely. The reputational cost of that broken promise, at scale, has not yet been fully absorbed.

Regulatory risk remains the wildcard: Unsupervised FSD has so far been deployed only in relatively manageable environments — Austin, Dallas, Houston. It has not yet been tested without a safety driver in the dense, unpredictable environments where autonomous vehicles face their hardest challenges — New York City, Chicago, adverse weather conditions at scale. A serious incident during the expansion phase could trigger regulatory responses that set the entire timeline back by years.

Musk dependency: Every layer of the moat we’ve described in this essay — the data flywheel, the iteration discipline, the Terafab ambition, the willingness to build what suppliers cannot — flows from a single founder’s vision and relentless execution. Elon Musk is not just the CEO of Tesla. He is the organizational force that has driven every major vertical integration decision, every hardware generation, every audacious bet. The risk is not that Musk is a distraction — it is that he is irreplaceable. No succession plan exists for the kind of first-principles thinking that built this moat. That is perhaps the biggest concentration risk in any large-cap company in the world today.

What We’re Watching

The key milestones we’re tracking closely:

V15’s arrival and what “major architectural improvements” actually means in practice. This is now the gating software factor for large-scale Robotaxi deployment and no timeline was given on the call.

The HW3 retrofit program — specifically whether Tesla announces a credible, affordable path for the 4 million affected owners or whether this becomes a prolonged legal and reputational problem.

Cybercab production ramp. Getting from “hundreds per week” to the tens of thousands needed to meaningfully impact transportation economics is a manufacturing challenge Tesla has navigated before — but not with a purpose-built robotaxi on a new platform.

Regulatory expansion beyond Texas. The pace of approvals for unsupervised FSD in other states will determine whether the “dozen states by year end” target Musk mentioned is achievable.

The Bottom Line

There are no guarantees that Tesla will execute against its audacious goals. The risks are real, the timeline history is humbling, and the competitive and regulatory landscape is genuinely difficult. Tesla already trades at a multiple that reflects enormous ambition; the market is not blind to the autonomous future.

What we are saying is that the architecture behind that future may be more defensible than even the current valuation implies. The data flywheel compounding by 30–40 million miles daily. The iteration asymmetry that builds capability internally at a pace that hardly shows up in quarterly results until it suddenly does. The vertical integration pattern — now extending to chip fabrication — that has turned every external frustration into a structural advantage that competitors cannot easily replicate.

Here is what makes this unusual: the people generating that data are doing so willingly. Tesla owners genuinely love their cars. FSD, for all its imperfections, remains the most capable assisted driving system available anywhere. Every mile driven is both a genuine product experience and a contribution to a training dataset that no competitor can replicate. That simultaneity — of value delivered and moat built — is what makes the architecture so durable.

We established a core position in Tesla in the first half of 2025 during maximum pessimism, as we laid out in our Q3 2025 letter. The pessimism has partially lifted. But the deeper thesis — data scale, cost structure, vertical integration, network effects, and now semiconductor fabrication — has only grown stronger. The moat is not yet visible in the numbers. It is visible in the architecture.

Earnings calls are where investors tend to focus on the next couple of quarters. The training servers are where the long-term moat is actually being built. That gap between what is currently visible and what is really cooking under the hood is, in our view, where the investment opportunity lives.

For more of our writings and future updates on Rowan Street’s long-term investment journey, subscribe on Substack: rowanstreet.substack.com

DISCLOSURES

The information contained in this letter is provided for informational purposes only, is not complete, and does not contain certain material information about our fund, including important disclosures relating to the risks, fees, expenses, liquidity restrictions and other terms of investing, and is subject to change without notice. The information contained herein does not take into account the particular investment objective or financial or other circumstances of any individual investor. An investment in our fund is suitable only for qualified investors that fully understand the risks of such an investment. An investor should review thoroughly with his or her adviser the fund’s definitive private placement memorandum before making an investment determination. Rowan Street is not acting as an investment adviser or otherwise making any recommendation as to an investor’s decision to invest in our funds. This document does not constitute an offer of investment advisory services by Rowan Street, nor an offering of limited partnership interests in our fund; any such offering will be made solely pursuant to the fund’s private placement memorandum. An investment in our fund will be subject to a variety of risks (which are described in the fund’s definitive private placement memorandum), and there can be no assurance that the fund’s investment objective will be met or that the fund will achieve results comparable to those described in this letter, or that the fund will make any profit or will be able to avoid incurring losses. As with any investment vehicle, past performance cannot ensure any level of future results. If applicable, fund performance information gives effect to any investments made by the fund in certain public offerings, participation in which may be restricted with respect to certain investors. As a result, performance for the specified periods with respect to any such restricted investors may differ materially from the performance of the fund.
All performance information for the fund is stated net of all fees and expenses, reflects the reinvestment of interest and dividends, includes the allocation for incentive interest, and has not been audited (except for certain year-end numbers). The methodology used to determine the Top 5 holdings is the largest portfolio positions by weight. The top 5 do not reflect all fund positions. The Top 5 can and will vary at any given point and there is no guarantee the fund will meet any specific level of performance. Net returns presented are net of fund expenses and pro-forma performance fees. Rowan Street Capital does not charge fixed management fees.